Histories of the Digital Now

Walk into any given gallery or museum today, and one will presumably encounter work that used digital technologies as a tool at some point in its production, whether videos that were filmed and edited using digital cameras and post-production software, sculptures designed using computer-aided design, or photographs as digital prints, to name just a few examples. Yet these works are not typically understood as digital art per se, since they use digital technologies as a production tool rather than a medium. Instead, digital art might be defined as art that explores digital technologies as a medium by making use of that medium’s key features, such as its real-time, interactive, participatory, generative, and variable characteristics, or by reflecting upon the nature and impact of digital technologies.

The definition of digital art has continuously evolved since the art form emerged. In the 1960s and ’70s, digital art consisted mostly of algorithmic drawings in which the results of artist-written code were drawn on paper by pen plotters, and computer-generated films that also involved artistic use of programming languages. While these works take the form of what seem to be more traditional drawings or films, their creators did not simply use digital technologies as a production tool but deeply engaged with the digital medium and the potential of its underlying code. From the 1980s to the early 2000s, digital art was predominantly understood as digital-born art; that is, art created, stored, and distributed via digital technologies. Digital artworks increasingly began to involve the features that we understand as characteristic of the art form today, consisting of software and installations or Internet art that is real time, interactive, process-oriented, and performative. While not all of these characteristics are exclusive to digital art and can also feature in different types of performative events or video art, they are not intrinsic elements of objects such as digital photographs or prints.

As digital technologies have infiltrated almost all aspects of art-making today, many artists, curators, and theorists have pronounced an age of “post-digital” and “post-internet” art. These terms attempt to describe a condition of artworks that are conceptually and practically shaped by the internet and digital processes—taking their language for granted—yet often manifest in the material form of objects such as paintings, sculptures, or photographs that speak about the digital medium and would not be possible without it.

As the language of the digital has become increasingly pervasive, we tend to associate the word “programmed” with the use of digital technologies. Yet throughout the history of art, artists have used programs—rule sets and abstract concepts—to create their work, employing mathematical principles to drive forms and ideas or establishing rules to explore structures and colors. Programmed: Rules, Codes, and Choreographies in Art, 1965–2018 traces some of these practices over the past fifty years, exploring the effects, creative potential, as well as limits of instruction- and rule-based art-making. The exhibition draws historical connections between conceptual, video, and contemporary digital art that may not be immediately obvious. The show covers a broad range of works, including paintings, weavings, drawings, dance scores, and software, as well as early light and TV sculptures from the 1960s and large-scale video and immersive installations. While not all the works are technological, they are still informed by the histories of art, science, and technology. In a world that has increasingly become algorithmically coded—from our conversations with our smart devices to our financial markets—it seems important to look at the aesthetic and social impact of these codes and ask, what kinds of programs do we create to express ourselves or to govern the world we live in?

Each of the exhibition’s two sections examines a different understanding of a program through an exploration of the historical trajectories that have informed today’s digital art. “Rule, Instruction, Algorithm” connects digital art to the rule-based conceptual artistic practices that predate digital technologies, with their emphasis on instructions and ideas as a driving force behind the work. The exhibition’s other section, “Signal, Sequence, Resolution,” focuses on the coding and manipulation of image sequences, the television, signals, and image resolution, thereby pointing to digital art’s origins in the history of moving images and the apparatuses of illusion and immersion.

Whether analog or digital, the works in Programmed have been shaped as much by the history of science and technology as by art-historical movements and influences. A brief survey of that technological history and some of the ways that artists engaged with emerging technologies will help to situate the specific historical trajectories that are the focus of the exhibition.

A Brief History of (Digital) Art and Technology

The years following World War II were formative in the evolution of digital media, marked by major theoretical and technological developments. In July 1945, the Atlantic Monthly published the essay “As We May Think” by Vannevar Bush, the American engineer and scientist who, during the war, headed the federal Office of Scientific Research and Development, which oversaw the Manhattan Project. (Vannevar Bush, “As We May Think,” Atlantic Monthly, July 1945.) Envisioning a future in which the focus of science and technology would be on serving, rather than destroying, humankind, Bush describes a device he calls “memex,” a desk with translucent screens that would allow users to browse documents and to create their own trail through a body of documentation. Bush imagines that the content—books, periodicals, images—could be purchased on microfilm, ready for insertion. The user could also input data directly. Bush’s specific device was never built, but his conception of it was profoundly influential in shaping the history of computing. The memex is now commonly acknowledged as a conceptual forebear to the electronic linking of materials and, ultimately, to the internet as a huge, linked, globally accessible database. In 1946, the University of Pennsylvania presented the world’s first digital computer, known as ENIAC (Electronic Numerical Integrator and Computer), which filled an entire room; five years later, the first commercially available digital computer, UNIVAC, which was capable of processing numerical as well as textual data, was patented. By then, American mathematician Norbert Wiener had coined the term cybernetics, from the Greek kybernetes (“governor” or “steersman”), to describe the emerging field of science devoted to the comparative study of different communication and control systems, such as the computer and the human brain.
Artists immediately saw the creative potential of electronic control systems and began to experiment with cybernetic art, such as using feedback loops in responsive sculptures.

The 1960s—the decade in which the earliest works exhibited in Programmed were created—turned out to be particularly important for the history of digital technologies, a time when the groundwork was laid for much of today’s technology and its artistic exploration. Bush’s basic ideas were developed further by Theodor Nelson, who in 1961 coined the words hypertext and hypermedia to describe a space of writing and reading where texts, images, and sounds could be interconnected electronically and linked by anyone contributing to a networked docuverse. Nelson’s hyperlinked environment was branching and nonlinear, allowing readers and writers to create and choose their own paths through the information. His concepts obviously anticipated the networked transfer of files and messages over the internet, which originated around the same time. The Soviet Union’s launch of Sputnik in 1957 had prompted the United States to create the Advanced Research Projects Agency (ARPA) within the Department of Defense in order to maintain a leading position in technological innovation. In 1964, the RAND Corporation, the foremost think tank of the Cold War era, developed a proposal for ARPA that conceptualized the internet as a communication network without central authority. By 1969, the infant network—named ARPANET, after its Pentagon sponsor—was formed by four of the “supercomputers” of the time: at the University of California, Los Angeles; the University of California, Santa Barbara; the Stanford Research Institute; and the University of Utah.

The end of the 1960s saw the birth of yet another important concept in computer technology and culture: the precursors of today’s information space and graphical user interface. In late 1968, Douglas Engelbart of the Stanford Research Institute introduced the ideas of bitmapping, windows, and direct manipulation through a mouse. His concept of bitmapping was groundbreaking in that it established a connection between the electrons flowing through a computer’s processor and an image on the computer screen. A computer processes in pulses of electricity that manifest themselves in either an “on” or “off” state, commonly referred to as the binaries “one” and “zero.” In bitmapping, each pixel of the computer screen is assigned small units of the computer’s memory (bits), which can also manifest themselves as on or off and be described as one or zero. The computer screen can thus be imagined as a grid of pixels that are either lit or dark, creating a two-dimensional space. The direct manipulation of this space by the user’s hand was made possible through Engelbart’s invention of the mouse. The basic concepts of Engelbart and of Ivan Sutherland, whose interactive display graphics program Sketchpad of 1963 was crucial in enabling computer graphics, were further developed by Alan Kay and a team of researchers at Xerox PARC in Palo Alto, California. Their work resulted in the creation of the graphical user interface and the “desktop” metaphor with its layered “windows” on the screen, which was ultimately popularized by Apple’s introduction of its Macintosh computer. While computers and digital technologies were by no means ubiquitous in the 1960s and ’70s, there was a sense that they would change society. It is not surprising that systems theory—encompassing ideas from fields as diverse as the philosophy of science, biology, and engineering—became increasingly important during these decades. 
The systems approach during the late 1960s and into the ’70s was broad in scope but deeply inspired by technological systems, and addressed issues ranging from social conditions to notions of the art object.
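The bitmapping principle described above, in which each pixel corresponds to a bit of memory that is either on or off, can be illustrated with a minimal Python sketch. It is only an illustration of the one-bit-per-pixel idea and not a reconstruction of Engelbart’s system; the function name `render_bitmap`, the 5-by-5 pattern, and the characters used for lit and dark pixels are all assumptions made for this example.

```python
def render_bitmap(bits, width):
    """Turn a flat sequence of bits into rows of lit ('#') and dark ('.') pixels."""
    rows = []
    for start in range(0, len(bits), width):
        row = bits[start:start + width]
        rows.append("".join("#" if bit else "." for bit in row))
    return rows


# Hypothetical "memory": 25 bits describing a 5x5 screen (a plus sign).
memory = [
    0, 0, 1, 0, 0,
    0, 0, 1, 0, 0,
    1, 1, 1, 1, 1,
    0, 0, 1, 0, 0,
    0, 0, 1, 0, 0,
]

screen = render_bitmap(memory, width=5)
print("\n".join(screen))
```

Flipping a single value in `memory` changes exactly one pixel on the rendered grid, which is the direct link between memory and image that the passage describes.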

Artists have always adopted and reflected upon the technologies of their time, and they quickly became interested in exploring the theories and concepts behind the advances in digital computing. The 1950s and ’60s saw a surge of participatory and/or technological art, created by artists such as Ben Laposky and John Whitney Sr.; John Cage, Allan Kaprow, and the Fluxus movement; and groups such as Independent Group / IG (1952–54: Eduardo Paolozzi, Richard Hamilton, William Turnbull et al.); ZERO (1958: Otto Piene, Heinz Mack et al.); GRAV/Groupe de Recherche d’Art Visuel (1960–68: François Morellet, Julio Le Parc et al.); and The Systems Group (1969: Jeffrey Steele, Peter Lowe et al.).

The fact that the relationship between art and computer technology at the time was often conceptual was largely due to the inaccessibility of the technology. Some artists were able to use discarded military computers while others gained access to computer technology through the universities where they worked. Wanting to forge what he described as an effective collaboration between engineers and artists, electrical engineer Billy Klüver founded Experiments in Art and Technology (EAT) in 1966 at Bell Labs, where he worked. Klüver developed joint projects with artists such as Andy Warhol, Robert Rauschenberg, Jean Tinguely, John Cage, and Jasper Johns that were first seen in performances in New York and ultimately at the Pepsi-Cola pavilion at the World Expo ’70 in Osaka, Japan. EAT was an early instance of the complex collaboration between artists, engineers, programmers, researchers, and scientists that would become a hallmark of digital art. Other artists who produced groundbreaking art under the auspices of Bell Labs at the time included Kenneth C. Knowlton, A. Michael Noll, Max Mathews, and Lillian Schwartz.

The 1960s also saw important exhibitions centered on art’s relationship to emerging technologies. A series of five international exhibitions organized in Zagreb between 1961 and 1973 under the title Nove tendencije (New Tendencies) was used to advance new concepts of art for the postwar era. They included the program “Computer and Visual Research” as part of the fourth exhibition, Tendencije 4 (1968–69), which highlighted the computer as a medium for artistic creation. The first two exhibitions of computer art were held in 1965: Generative Computergrafik, showing work by Georg Nees, at the Technische Hochschule in Stuttgart, Germany, in February, followed in April by Computer-Generated Pictures, featuring work by Bela Julesz and A. Michael Noll, at the Howard Wise Gallery in New York. Although their works resembled abstract drawings and seemed to replicate aesthetic forms that were very familiar from traditional media, these artists captured essential aesthetics of the digital medium in outlining the basic mathematical functions that drive any process of “digital drawing.” In 1968, the exhibition Cybernetic Serendipity at the Institute of Contemporary Arts in London presented works—ranging from plotter graphics to light-and-sound environments and sensing “robots”—that today may seem clunky and overly technical but which nonetheless anticipated many of the important characteristics of the digital medium. Some works focused on the aesthetics of machines and transformation, such as painting machines and pattern or poetry generators. Others were dynamic and process-oriented, exploring possibilities of interaction and “open” systems. In 1970, American art historian and critic Jack Burnham organized the exhibition Software – Information Technology: Its New Meaning for Art at the Jewish Museum in New York.
In addition to featuring the work of artists such as Agnes Denes, Joseph Kosuth, Nam June Paik, and Lawrence Weiner—all of whom are included in Programmed—the show also exhibited the prototype of Theodor Nelson’s hypertext system, Xanadu.

Using the new technology of the time, such as video and satellites, artists in the 1970s also began to experiment with live performance and networks that anticipated the interactions now taking place on the internet and through the online streaming of video and audio. The focus of these artists’ projects ranged from the application of satellites for the mass dissemination of a television broadcast to the aesthetic potential of video teleconferencing and the exploration of a real-time virtual space that collapsed geographic boundaries. At Documenta 6 in Kassel, Germany, in 1977, Douglas Davis organized a satellite telecast to more than twenty-five countries, which included performances by Davis himself, Nam June Paik, Fluxus artist and musician Charlotte Moorman, and German artist Joseph Beuys. In the same year, a collaboration between artists in New York and San Francisco resulted in the Send/Receive Satellite Network, a fifteen-hour, two-way interactive satellite transmission between the two cities. Also in 1977, what became known as the world’s first interactive satellite dance performance—a three-location, live-feed composite performance involving performers on the East and West coasts of the United States—was organized by Kit Galloway and Sherrie Rabinowitz, in conjunction with NASA and the Educational Television Center in Menlo Park, California. The project established what the creators called “an image as place,” a composite reality that immersed performers in remote places into a new form of “virtual” space.

Throughout the 1970s and ’80s, painters, sculptors, architects, printmakers, photographers, and video and performance artists increasingly began to experiment with new computer-imaging techniques. During this period, digital art evolved into the multiple strands of practice that would continue to diversify in the 1990s and 2000s, ranging from more object-oriented works to pieces that incorporated dynamic and interactive aspects. With the advent of the World Wide Web in the mid-1990s, digital art found a new form of expression in net art, which became an umbrella term for numerous forms of artistic explorations of the internet. In the early 2000s, net art entered a new phase when artists began to critically engage with the platforms associated with Web 2.0 and social media, producing work on social networking sites such as Facebook, YouTube, Twitter, and Instagram. As digital technologies became part of the objects surrounding us—forming the so-called internet of things—and familiarity with the language of the digital continued to grow, artists began to engage in practices that are now referred to as post-digital, creating works across a range of media that are deeply dependent on digital technologies in their material form.

Rule, Instruction, Algorithm

The works exhibited in Programmed have to be seen in the context of the technological, scientific, and art-historical developments briefly outlined above. What is now understood as digital art is embedded in complex and multifaceted histories that interweave several strands of artistic practice. One of these art-historical lineages is explored in the “Rule, Instruction, Algorithm” section of Programmed, which connects early rule- and instruction-based art forms, such as conceptual art, to algorithmic art and art practices that set up open technological systems.

Among the earliest works in the show are screen prints by Josef Albers, made long after the artist emigrated to the United States from Germany, where he had been an instructor at the Bauhaus, the art school shuttered by the Nazis in 1933 whose innovative approach to design would nevertheless inspire calls for a Digital Bauhaus—unifying art, design, and technology—in arts and academia from the 1990s onwards. Albers was particularly interested in color theory and investigating the perceptual changes in hue caused by placing different colors next to each other. In the works from his Homage to the Square and Variant series exhibited in the show, he developed rules for nesting colored squares and rectangles to emphasize how our perception of a single color—its hue, saturation, and transparency—varies depending on its proximity to and interaction with adjacent colors. Albers’s work is paired with John F. Simon Jr.’s Color Panel v1.0 (1999), a work of software art based on the Bauhaus experiments with color and displayed on a laptop modified by the artist. Dividing the screen into five rectangles, the software written by Simon encodes variations of transparency and color coding and mixing. One of the rectangles is a programmed version of the “transparency problem” that Albers posed to his students, asking them to mix intermediate colors to make it appear that one shape overlay another. In Color Panel v1.0, it is the algorithm that mixes the colors to simulate transparency.
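One simple way an algorithm might mix an intermediate color for the “transparency problem” is linear interpolation between two RGB values. The Python sketch below illustrates that general technique only; it is not Simon’s code, and the function name `mix`, the two sample colors, and the equal weighting are assumptions chosen for the example.

```python
def mix(color_a, color_b, t=0.5):
    """Linearly interpolate between two RGB colors.

    t = 0 returns color_a, t = 1 returns color_b, and values in
    between give the intermediate color for the overlapping region.
    """
    return tuple(round(a + (b - a) * t) for a, b in zip(color_a, color_b))


# Hypothetical colors for two overlapping shapes.
red = (200, 40, 40)
blue = (40, 40, 200)

# The color painted where the shapes overlap, so that one appears
# to lie transparently over the other.
overlap = mix(red, blue)
print(overlap)  # (120, 40, 120)
```

Varying `t` shifts the overlap toward one color or the other, which changes which of the two shapes appears to sit on top.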

Instruction- and rule-based practice, as one of the historical lineages of digital art, featured prominently in art movements such as Dada (which peaked from 1916 to 1920), Fluxus (named and loosely organized in 1962), and conceptual art (1960s and ’70s), each of which incorporated a focus on concept, event, and audience participation, as well as variations of formal instruction. The idea of rules being a process for creating art also has a clear connection with the algorithms that form the basis of all software and every computer operation: a procedure of formal instructions that accomplishes a result in a finite number of steps. Just as with the combinatorial and rule-based processes of Dada poetry or Fluxus performances, the basis of any form of computer art is the instruction as a conceptual element.

A large group of works within “Rule, Instruction, Algorithm” explores the connection between programming and conceptual artists, who saw the idea or concept as a driving force behind their own artistic practices. Among the most prominent conceptual artists, Sol LeWitt created extensive bodies of idea- and instruction-based work in mediums ranging from drawings, photographs, and prints to sculptures that he referred to as “structures.” His wall drawings consist of instructions written in natural language that are executed as drawings at the specific exhibition site. Leaving the execution of the work to someone other than the artist was central to LeWitt’s notion of conceptual art: “In Conceptual art the idea or concept is the most important aspect of the work. When an artist uses a conceptual form of art, it means that all of the planning and decisions are made beforehand and the execution is a perfunctory affair. The idea becomes a machine that makes the art.” (Sol LeWitt, “Paragraphs on Conceptual Art,” Artforum, June 1967, p. 80.)

In Programmed, one of the four walls of LeWitt’s Wall Drawing #289 (1976), which implements the instruction “24 lines from the center, 12 lines from the midpoint of each of the sides, 12 lines from each corner,” is juxtaposed with Casey Reas’s {Software} Structure #003 A and #003 B (2004/2016). Reas’s software executes the instructions, “A surface filled with one hundred medium to small circles. Each circle has a different size and direction, but moves at the same slow rate. Display: A. The instantaneous intersections of the circles; B. The aggregate intersections of the circles.” Reas’s {Software} Structures explicitly reference the work of LeWitt and explore the relevance of conceptual art to the idea of software art. The artist starts his process by writing textual descriptions that outline dynamic relations between visual elements and then implements them as software. Reas is also well known for having created, together with Ben Fry, the programming language Processing, an open-source development environment using the Java language that strives to teach nonprogrammers the fundamentals of computer programming in a visual context.
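To make the kinship between a natural-language instruction and a program concrete, the LeWitt instruction quoted above can be loosely transcribed into Python. This is a hedged sketch and not a rendering of the actual wall drawing: the instruction leaves the endpoints of the lines to the person executing it, so the seeded random endpoints here, like the function name `wall_drawing` and the wall dimensions, are assumptions made for illustration.

```python
import random


def wall_drawing(width, height, seed=0):
    """Return line segments following the quoted instruction:
    24 lines from the center, 12 from the midpoint of each side,
    12 from each corner. Endpoints are chosen at random (an assumption)."""
    rng = random.Random(seed)
    center = (width / 2, height / 2)
    midpoints = [(width / 2, 0), (width, height / 2),
                 (width / 2, height), (0, height / 2)]
    corners = [(0, 0), (width, 0), (width, height), (0, height)]

    # Pair every origin point with the number of lines that start there.
    origins = [(center, 24)]
    origins += [(point, 12) for point in midpoints]
    origins += [(point, 12) for point in corners]

    lines = []
    for origin, count in origins:
        for _ in range(count):
            end = (rng.uniform(0, width), rng.uniform(0, height))
            lines.append((origin, end))
    return lines


lines = wall_drawing(400, 300)
print(len(lines))  # 120 segments: 24 + 4 * 12 + 4 * 12
```

Changing the seed produces a different drawing from the same instruction, much as different draftspeople produce different realizations of the same wall drawing.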

It is no coincidence that instruction-based practices of the 1960s such as conceptual art developed at the same time that artists began to use computers to create early algorithmic art. The pioneers of this art form wrote code that was stored on punch cards and then run through a computer to drive pen plotters that would create “digital drawings” on paper. Early practitioners became known as Algorists and included artists Harold Cohen, Herbert Franke, Manfred Mohr, Vera Molnar, Frieder Nake, and Roman Verostko, as well as Chuck Csuri, Frederick Hammersley, and Joan Truckenbrod, each of whose work is on view in Programmed. Csuri created Sine Curve Man (1967), known as the first figurative computer drawing done in the United States, at Ohio State University in collaboration with programmer James Shaffer using an IBM 7094, considered one of the most powerful computers of the early 1960s. The 7094 was employed by NASA in both the Gemini and Apollo space programs, and it was used in early missile defense systems as well. The output of the 7094 consisted of 4-×-7-inch “punch cards” that stored information to drive a drum plotter, specifying when to pick the pen up, move it, and put it down, as well as when the end of a line had been reached, and so on.
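The kind of information such cards carried (pen up, move, pen down, end of line) can be suggested with a small Python sketch that produces a command list for a single sine curve. The command names `PEN_UP`, `MOVE`, and `PEN_DOWN` and the function `sine_curve_commands` are hypothetical; they do not reproduce the actual card format of the IBM 7094 or the program behind Sine Curve Man.

```python
import math


def sine_curve_commands(samples=8, amplitude=1.0):
    """Return hypothetical plotter commands that trace one period of a sine curve."""
    commands = [("PEN_UP",)]  # travel to the start without drawing
    for i in range(samples + 1):
        x = 2 * math.pi * i / samples
        y = amplitude * math.sin(x)
        commands.append(("MOVE", round(x, 3), round(y, 3)))
        if i == 0:
            commands.append(("PEN_DOWN",))  # start drawing at the first point
    commands.append(("PEN_UP",))  # end of the line has been reached
    return commands


commands = sine_curve_commands()
for command in commands:
    print(command)
```

The drawing itself never appears in the program; only the sequence of instructions does, and the plotter turns that sequence into a line on paper.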

Other instruction-based practices originating in the 1960s were the events and “happenings” of the international Fluxus group of artists, musicians, and performers, which were also often based on the execution of precise instructions. Their fusion of audience participation and event can be seen as a precursor of the interactive, event-based nature of many of today’s computer artworks. The concepts of the found element and instructions in relation to randomness also formed the basis of the musical compositions of vanguard American composer John Cage, whose work in the 1950s and ’60s is most relevant to the history of digital art, anticipating numerous experiments in interactive art. Cage described structure in music as its divisibility into successive parts, and often filled the pre-defined structural parts of his compositions with found, preexisting sounds. In Programmed, the connection between musical scores and instruction-based art is evinced in the work Dance (1979), a collaboration between dancer and choreographer Lucinda Childs and Sol LeWitt, with a score by Philip Glass. Projected onto the gallery floor below video documentation of Dance are Childs’s diagrams that outline the movements of the dancers performing to Glass’s music, which—similar to that of Cage—uses repetitive structures.

Programmed reveals the use of instructions and language as material in a group of works in which instruction and form collapse into one another and become one and the same. Both Lawrence Weiner’s HERE THERE & EVERYWHERE (1989)—comprised of four text segments that invite viewers to imagine places—and Joseph Kosuth’s Five Words in Green Neon (1965)—literally spelling out its title in green neon—use language as material and medium, highlighting its potential to generate art. The two works are juxtaposed with W. Bradford Paley’s CodeProfiles (2002), a work of software art that displays the very code that makes the code itself visible on the screen. As in Kosuth’s piece, viewers are looking at the language that creates the work. Paley also shows the role of the reader, writer, and computer in the construction of the piece: an amber line indicates how viewers can read the code line by line, a white line traces how Paley wrote the code, and a green line shows how the computer executes the code to render it visible on the screen. CodeProfiles thereby underscores that digital art is based in rules and instruction, as is most conceptual art.

The potential of instructions and algorithms is further explored in a group of works by Ian Cheng, Alex Dodge, and Cheyney Thompson that engage with the generative qualities of rule-based systems. Theorist, artist, and curator Philip Galanter defines generative art as any art practice in which the artist uses a system, such as a set of natural-language rules, biological processes, mathematical operations, a computer program, a machine, or other procedural invention, that is set into motion with some degree of autonomy, thereby contributing to or resulting in a completed work of art. (Philip Galanter, “What Is Generative Art? Complexity Theory as a Context for Art Theory,” Proceedings of the International Conference on Generative Art, Milan, Italy [Milan: Generative Design Lab, Milan Polytechnic University, 2003].) Generative art practices are used in a range of communities, among them computerized music, computer graphics and animation, VJ culture, and glitch art. They can be traced back to ancient forms such as the symmetric composition rules for generating patterns, for example the masterpieces found in the Islamic world, one of the cradles of mathematical innovation. Not coincidentally, the word algorithm has its roots in Arabic. In Programmed, the generative patterns of the artworks on view are set in motion by systems, such as a conversation between artificial intelligences or the Drunken Walk algorithm, which is used in fields ranging from economics to chemistry and physics to map unpredictability. These algorithmic generative processes for creating patterns also play a crucial role in one of the most important inventions in the history of computing, the Jacquard loom. Created by Joseph Marie Jacquard in 1804, the loom revolutionized the process of weaving through the use of programs that were stored as punched cards to automate the generative creation of fabrics.
Charles Babbage and Herman Hollerith would later use programming with punch cards in their conceptualization of computers. Thus, one could argue that generative art made the invention of computers possible, a connection implied by the Jacquard weavings of both Rafaël Rozendaal and Mika Tajima in the exhibition.
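The Drunken Walk mentioned above is commonly known as a random walk: a path built from successive steps in randomly chosen directions. The following Python sketch shows the basic idea on a square grid. It is a generic illustration, not the procedure used in any work in the exhibition, and the function name `drunken_walk`, the four-direction grid, and the fixed seed are assumptions.

```python
import random


def drunken_walk(steps, seed=0):
    """Return the list of grid positions visited by a simple random walk."""
    rng = random.Random(seed)  # seeded so the same path can be regenerated
    x, y = 0, 0
    path = [(x, y)]
    for _ in range(steps):
        # Each step moves one unit up, down, left, or right with equal chance.
        dx, dy = rng.choice([(1, 0), (-1, 0), (0, 1), (0, -1)])
        x, y = x + dx, y + dy
        path.append((x, y))
    return path


path = drunken_walk(1000)
print(path[-1])  # final position after 1000 steps
```

The rule is fixed and very simple, yet the path it generates cannot be anticipated step by step, which is what makes systems of this kind useful for generative pattern-making.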

Signal, Sequence, Resolution

While the works brought together in this section of the exhibition also employ rules and instructions in their creation, they trace a historical lineage that is distinct from conceptual practices, focusing instead on concepts of light and the moving image, a trajectory that originates with early kinetic art and continues through to new digital forms of cinema and interactive notions of television. Embedded in this trajectory is the evolution of different types of optical environments, which have been researched by scholars of art and technology such as Oliver Grau and Erkki Huhtamo. (See Oliver Grau, Virtual Art: From Illusion to Immersion [Cambridge, MA: MIT Press, 2003]; and Erkki Huhtamo, Illusions in Motion: Media Archaeology of the Moving Panorama and Related Spectacles [Cambridge, MA: MIT Press, 2013].)

A group of pre-digital works from the 1960s plays with the concept of the electronic signal and draws attention to its function as a carrier of instructions and visual information. For his work Magnet TV (1965), Nam June Paik, a pioneer of video art, placed an industrial-size magnet on top of a TV so that the magnetic interference with the television’s reception of electronic signals distorts the picture into abstract forms. In Thrust (1969), Earl Reiback similarly draws attention to the creation of images through electronic signals by emptying the cathode-ray tube monitor of a TV and replacing it with sculptural elements. A different engagement with light and signal unfolds in Jim Seawright’s Searcher (1966), an early kinetic sculpture whose searchlight both generates light and reacts to it. In scientific terms, kinetic energy is the energy possessed by a body by virtue of its motion, and kinetic art, which peaked from the mid-1960s to the mid-1970s and was often inspired by ideas of cybernetic control systems, frequently produced movement through machines activated by the viewer.

The concept of the digital moving image and digital cinema has been shaped by several strands of media histories and practices, ranging from animation and the live-action movie to immersive environments and the spatialization of the image. We now associate cinema mostly with live action, which is only one of the many trajectories of the moving image’s history. Another trajectory has its roots in the early moving images of the nineteenth century that were based on hand-drawn images and viewed through pre-cinematic devices, such as the Zoetrope and the Kinetoscope. This strand of the history of cinema would develop into animation, which has also gained new momentum and popularity through the possibilities of the digital medium. Continuing the pioneering work in computer-based graphics and filmmaking by figures such as John Whitney Sr. and Chuck Csuri in the 1960s, Bell Labs artist-in-residence Lillian Schwartz created three groundbreaking films on view in Programmed. (John Whitney Sr., often called “the father of computer graphics,” used old analog military computing equipment to create his short film Catalog (1961), a catalogue of effects on which he had been working for years. Whitney’s later films Permutations (1967) and Arabesque (1975) secured his reputation as a pioneer of computer filmmaking. Chuck Csuri’s film Hummingbird (1967), for which more than 30,000 individual images generated by a computer were drawn on film by means of a microfilm plotter, also is considered a landmark of computer-generated “animation.”) For her film Enigma (1972), Schwartz used a programming macro language that divides the screen into a grid of pixels and generates images as patterns of dots. Through rapid shifts between rectangular forms, she creates the perception of strobing color. In Newtonian I and Newtonian II (both 1978), Schwartz draws upon mathematical systems to create the illusion of three-dimensional images.

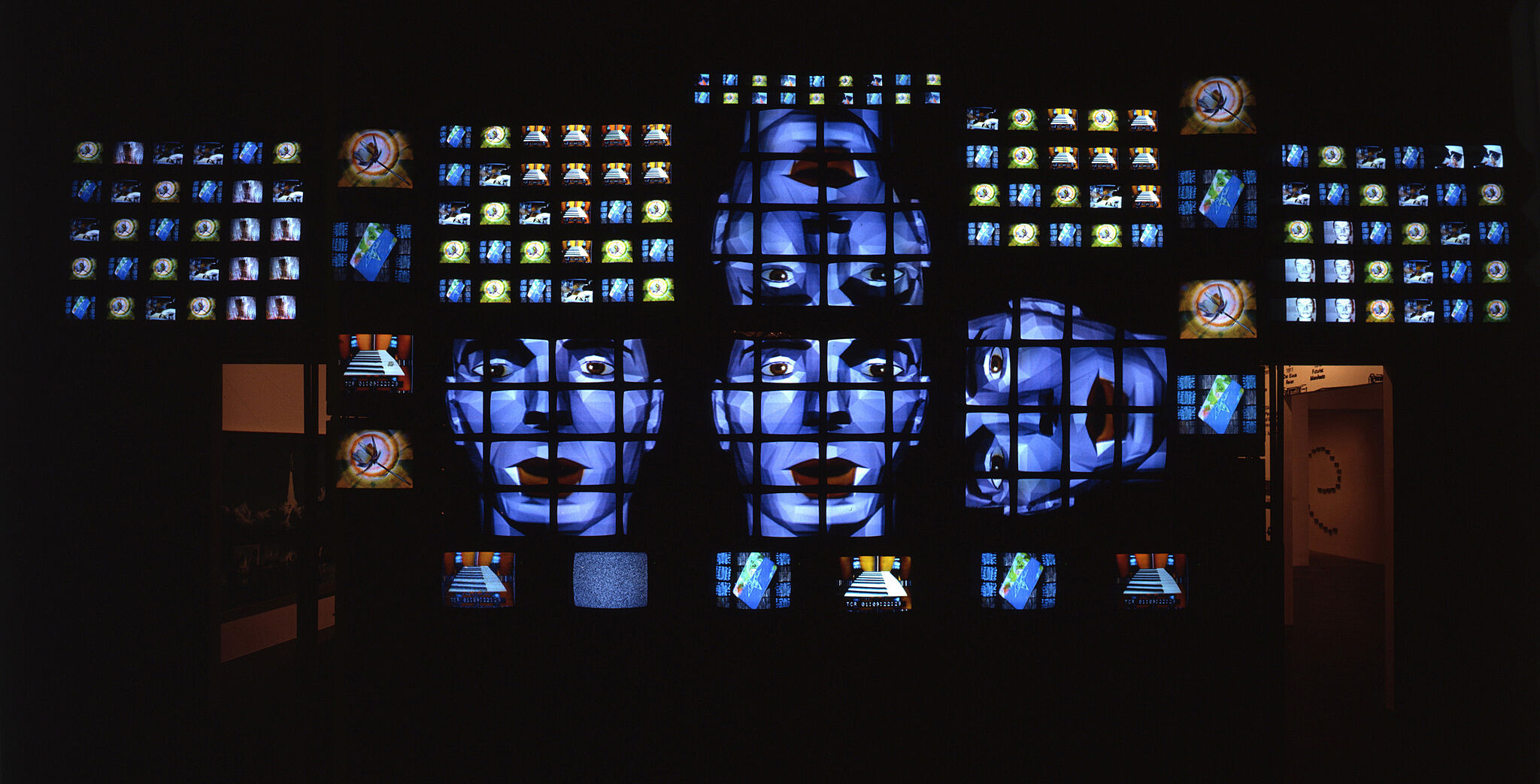

In his seminal book of 1970, Gene Youngblood chronicled artists’ experimentation with spatializing moving images in a physical environment as part of his broader theorization of a new “expanded cinema.” (Gene Youngblood, Expanded Cinema, New York: Dutton, 1970.) Both Nam June Paik’s Fin de Siècle II (1989), one of the centerpieces of the current exhibition, and Mynd (2000) by the Icelandic artist Steina, another pioneer of video art and co-founder of The Kitchen in New York, explore this creation of visual spaces in their resequencing of images. Consisting of a wall of more than two hundred video monitors, Fin de Siècle II choreographs sequences from music and art videos, anticipating the multi-channel “remix” of videos on the web, a version of which Paik could be said to have presciently envisioned as early as 1974 in what he called an “electronic super highway.” In his report “Media Planning for the Post-Industrial Society,” which Paik submitted to the Art Program of the Rockefeller Foundation, itself an early supporter of artists working in “new media” such as video and television, the artist explicitly outlines his vision for an “electronic super highway.” Steina’s Mynd creates a different kind of image space by surrounding viewers with images of the Icelandic landscape that are subjected to different kinds of processing through the video-editing software Image/ine, thereby contrasting analog and digital image creation and processing. The spatialization of images finds yet another expression in Jim Campbell’s Tilted Plane (2011), a room-size installation of hundreds of hanging LED lights that form a grid of “pixels,” which in turn functions as a low-resolution video display. A two-dimensional video of birds taking off and landing displayed on the three-dimensional tilted plane of lights becomes a flickering abstraction as the viewer moves into the room.
Campbell’s exploration of resolution and pixelation can be traced to Pointillism, the painting technique developed by Georges Seurat and Paul Signac in 1886 in which distinct tiny dots of color, mostly the same size, are applied to canvas to form an image.

In more conventional cinema, digital technologies are playing an increasingly important role as a production tool; even if a movie is not a special-effects extravaganza, images that appear entirely realistic have often been constructed through digital manipulation. However, the use of digital technologies as a tool in the production of a linear film does not fundamentally challenge the language of film. Yet the digital medium has the potential to redefine the very identity of cinema and the moving image in many ways. From the possibilities of instant copying and remixing to the seamless blending of disparate visual elements into a simulated form of reality, the medium challenges traditional notions of realism and questions qualities of representation. In addition to altering the status of representation and expanding the possibilities for creating moving images, be they live action or animation, the digital medium has also profoundly affected narrative and non-narrative film through its inherent potential for interaction.

The element of interaction in film and video is not intrinsic to the digital medium and has already been employed by artists and performers who experimented with light in their projection, for example by incorporating the audience in the artwork through shadow play, an ancient form of storytelling and entertainment. Closed-circuit television and live video captures that made the audience the “content” of the projected image continued these explorations. What is considered to be the world’s first interactive movie, KinoAutomat by Radúz Činčera, was first shown in the Czechoslovak pavilion at the 1967 World Expo in Montreal. Shot in alternative versions of image sequences, the film required the audience to vote on how the plot would unfold. Yet digital media have inarguably exploded the potential for interaction, taking these earlier experiments to new levels and leading to new forms of artistic exploration. Featured in Programmed is Lynn Hershman Leeson’s Lorna (1979–84), the first artwork done on interactive LaserDisc. The work unfolds on a television placed within a room-size installation that mirrors the space shown on screen, and its branching narrative is navigated by viewers via a remote control. The television is both the system of interaction and the only means of mediation for the video’s protagonist, Lorna, an agoraphobic isolated in her apartment. The disruptions in the non-linear narrative mirror the instabilities of Lorna’s psychological state.

As digital technologies have impacted nearly all aspects of daily life, artists have used them not only for the creation of new forms and image spaces but also for a critical engagement with the social, cultural, and political impacts of these technologies. A group of works in Programmed explores our increasingly encoded realities and the biases that may be inscribed in them. Keith and Mendi Obadike’s The Interaction of Coloreds (2002), for example, uses satire to highlight how customers’ skin color factors into online commerce, questioning assumptions about the internet as supposedly color-blind. Jonah Brucker-Cohen and Katherine Moriwaki’s America’s Got No Talent (2012) creates an interactive data visualization of the Twitter feeds surrounding popular network-TV talent shows, drawing attention to the ways in which opinion and sentiment are affected by reality television’s use of social media. The artworks exhibited in this group point to the profound changes that technologies have brought about in gathering, processing, and classifying data, thereby altering the frameworks for communication and the fabric of society as a whole.

***

Digital technologies and interactive media have expanded the range of artistic practices, from advancing concepts originally explored in conceptual art to generating new possibilities for moving-image production and the creation of immersive visual spaces. In the process they have challenged traditional notions of the artwork, audience, and artist. The artwork is often transformed into an open structure in a process that relies on a constant flux of information and engages the viewer in the way a performance might. The public or audience becomes a participant in the work, reassembling the textual, visual, and aural components of the project. Rather than being the sole creator of a work of art, the artist often plays the role of a mediator or facilitator for audiences’ interactions with and contributions to the artwork. The creation process of digital art itself frequently relies on complex collaborations between the artist and a team of programmers, engineers, scientists, and designers. As such, digital art has brought about work that often defies easy categorization, collapsing boundaries between the disciplines of art, science, technology, and design. Presenting examples of the ways in which artistic practice has engaged with rules, codes, and choreographies for the past fifty years, Programmed points to the rich and complex histories of art, science, and technology, and the ways in which each has driven and nurtured the evolution of the others and, in the process, changed how we construct and perceive our societies and cultures at large.